When a sound rotates between loudspeakers, something more than its position changes: its timbre changes too. Frequencies are emphasized and recede as the source moves through space, and that spectral evolution becomes compositional material in its own right. This is spectral spatialization: the integration of spectrum and space as structural elements of a work, rather than the treatment of spatialization as a layer applied on top of finished sound. [1]

Spatialization became a central compositional concern in electronic music with Stockhausen's Gesang der Jünglinge (1956), but the spectral dimension of spatial movement has remained largely unexplored. This article examines several techniques developed in Max/MSP, including cross-synthesis and spectral shredding, which were tested on the twelve-channel system of EARS [2].

Spectral Domain

A periodic wave is a wave that repeats itself. Although natural phenomena may seem to be governed by chaos, patterns of repetition make them comprehensible to us. The seasons repeat annually, Halley's Comet returns every 75 to 76 years, and water follows a continuous cycle of precipitation and evaporation. At a smaller scale, mechanical waves (oscillations that travel through a medium in space and time) are fundamental components of nature, transporting energy from one place to another.

We have evolved to perceive two kinds of waves, light and sound, with our eyes and ears respectively. They differ in the way they propagate, in their frequency content and in their wavelength. Visible light is electromagnetic radiation with frequencies from about 405 THz to 790 THz, corresponding to wavelengths from about 740 nanometers (nm) down to 380 nm. When light passes through a prism, it decomposes into its constituent colors; Isaac Newton showed that these colors could be recombined into the original white light by passing them through a second prism. There is no prism that decomposes sound into its sinusoidal components, but there is a mathematical equivalent: the Fourier Transform, which decomposes a sound wave into sinusoids. Under certain conditions, the same wave can be reconstructed from them using the Inverse Fourier Transform.
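The frequency and wavelength ranges quoted for visible light are linked by $f = c/\lambda$, where $c$ is the speed of light in a vacuum. The short Python check below converts the two wavelength endpoints into frequencies; the helper name is illustrative only.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s (exact SI value)

def wavelength_to_thz(wavelength_nm):
    """Convert a wavelength in nanometres to a frequency in terahertz via f = c / lambda."""
    return SPEED_OF_LIGHT / (wavelength_nm * 1e-9) / 1e12

for nm in (380, 740):
    print(f"{nm} nm -> {wavelength_to_thz(nm):.1f} THz")
```

This prints about 788.9 THz for 380 nm and 405.1 THz for 740 nm, matching the rounded range of 405 to 790 THz.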

Joseph Fourier (1768-1830), a French mathematician and physicist, developed his transformations while studying heat propagation. He proposed that a periodic function could be expressed (under fairly general conditions) as an infinite sum of simple sinusoidal waves. This sum, the Fourier series, decomposes a periodic function into sine and cosine components:

$$f(t) = a_0 + \sum_{n=1}^{\infty} (a_n \cos(n\omega t) + b_n \sin(n\omega t))$$

or equivalently, using complex exponentials:

$$f(t) = \sum_{n=-\infty}^{\infty} c_n e^{in\omega t}$$

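where $a_n$ and $b_n$ are the amplitude coefficients of the constituent waves, $c_n$ are the equivalent complex coefficients ($c_0 = a_0$ and $c_{\pm n} = \frac{1}{2}(a_n \mp i b_n)$ for $n \ge 1$), $\omega$ is the fundamental angular frequency, and $t$ represents time.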
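As an illustration of the series (the example is not taken from the article's own material), a unit square wave has $a_0 = a_n = 0$, $b_n = \frac{4}{n\pi}$ for odd $n$ and $b_n = 0$ for even $n$. The Python sketch below sums the first few odd harmonics and evaluates them at a quarter period, where the square wave equals 1; the partial sums approach 1 as more terms are added.

```python
import math

def square_wave_partial_sum(t, n_terms, omega=2 * math.pi):
    """Partial Fourier series of a unit square wave with period 2*pi/omega.

    Only odd harmonics contribute: b_n = 4 / (n * pi) for odd n, a_0 = a_n = 0.
    `n_terms` is the number of odd harmonics actually summed.
    """
    total = 0.0
    for i in range(n_terms):
        n = 2 * i + 1                      # harmonics 1, 3, 5, ...
        b_n = 4.0 / (n * math.pi)
        total += b_n * math.sin(n * omega * t)
    return total

quarter_period = 0.25                      # with omega = 2*pi the period is 1
for terms in (1, 5, 50, 500):
    print(terms, round(square_wave_partial_sum(quarter_period, terms), 4))
```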

The Discrete Fourier Transform (DFT) has its foundations in the Fourier series and provides a way to analyze digitally stored audio signals. To analyze music digitally, we need software or hardware systems that can emulate aspects of human hearing. A fundamental requirement is the ability to detect temporal events and their repetition rates, since these form the basis of musical structure, from the rhythmic cycles of Indian tala to the formal design of the classical sonata.

The Fast Fourier Transform (FFT) is an efficient algorithm for computing the DFT. For time-localized frequency analysis we use the Short-Time Fourier Transform (STFT), which applies the DFT to short, overlapping segments of the signal. The DFT converts a finite, discrete signal into a finite set of sinusoidal components, providing magnitude and phase information for each frequency bin.

Given a continuous-time signal $x(t)$, its Fourier Transform $X(f)$ is:

$$X(f) = \int_{-\infty}^{\infty} x(t)\,e^{-j2\pi ft}\,dt$$

and its inverse is:

$$x(t) = \int_{-\infty}^{\infty} X(f)\,e^{j2\pi ft}\,df$$

For discrete signals, the DFT is defined as:

$$X[k] = \sum_{n=0}^{N-1} x[n]\,e^{-j2\pi kn/N}$$

where:

- $k = 0, 1, \dots, N-1$ is the frequency bin index

- $N$ is the length of the input sequence

- $x[n]$ is the discrete input signal

- $X[k]$ is the complex frequency spectrum

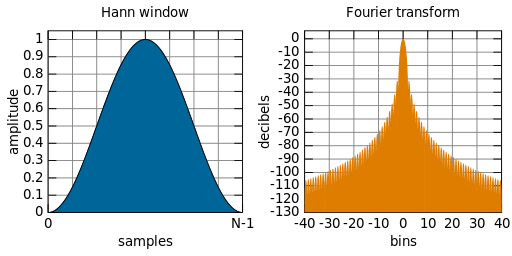
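The DFT formula can be transcribed directly into code. The Python sketch below is a naive $O(N^2)$ implementation for illustration only (an FFT computes the same result in $O(N \log N)$). It also includes the inverse, $x[n] = \frac{1}{N}\sum_{k=0}^{N-1} X[k]\,e^{j2\pi kn/N}$, to show that the analysis can be undone. Bin $k$ corresponds to the frequency $k f_s / N$, where $f_s$ is the sample rate.

```python
import cmath
import math

def dft(x):
    """Naive O(N^2) DFT: X[k] = sum_n x[n] * exp(-j*2*pi*k*n/N)."""
    N = len(x)
    return [sum(x[n] * cmath.exp(-2j * math.pi * k * n / N) for n in range(N))
            for k in range(N)]

def idft(X):
    """Inverse DFT: x[n] = (1/N) * sum_k X[k] * exp(+j*2*pi*k*n/N)."""
    N = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * n / N) for k in range(N)) / N
            for n in range(N)]

N = 16
# A cosine completing exactly 3 cycles in N samples falls in bin 3 (and its mirror, bin N - 3).
x = [math.cos(2 * math.pi * 3 * n / N) for n in range(N)]
X = dft(x)
magnitudes = [abs(v) for v in X]
peak_bin = max(range(N // 2 + 1), key=lambda k: magnitudes[k])
print(peak_bin, round(magnitudes[peak_bin], 6))  # 3 8.0
```

The peak magnitude is $N/2$ because a unit-amplitude cosine splits its energy between bin 3 and its mirror. At a sample rate of 44100 Hz, bin 3 of this 16-point DFT would correspond to $3 \times 44100 / 16 = 8268.75$ Hz.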

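The DFT treats a finite segment as if it were one period of an infinitely repeating signal, so any discontinuity between the end and the beginning of the segment shows up as spurious frequency content. Finite segments must therefore be handled carefully. This is accomplished through windowing: multiplying the signal by a window function that smoothly tapers to zero at its edges. Common window functions include: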

- Hann window

- Hamming window

- Blackman window

These windows reduce spectral leakage: spurious frequency components that appear because the signal has been truncated. Consecutive windows typically overlap by 50-75% of their length, which gives a denser sequence of analysis frames in time and allows the frames to be overlap-added back together for resynthesis. Modern audio programming environments such as Max/MSP, Pure Data, Csound and SuperCollider implement efficient FFT algorithms that handle these complexities.

The DFT differs from the continuous Fourier Transform in three key respects:

- It operates on discrete-time sequences, making it suitable for digital audio

- It uses summation rather than integration, enabling efficient computational implementation

- It processes finite-length sequences, eliminating the need for infinite-time function definitions

Windowing

The FFT can be used to analyze a large work or an entire piece of music in order to understand its form or key features. Musical works can be analyzed at various time scales to reveal different aspects of their structure, and the choice of window size fundamentally affects what information the analysis can extract. Choosing a window size involves a fundamental trade-off:

- Long windows result in:
  - Better frequency resolution (ability to distinguish between close frequencies)
  - Poorer time resolution (less precise information about when events occur)
  - Greater computational demands
  - Potential for numerical precision issues

- Short windows result in:
  - Better time resolution (more precise timing of events)
  - Poorer frequency resolution
  - More efficient computation
  - Better suitability for real-time analysis
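The trade-off can be made concrete. For a window of $N$ samples at sample rate $f_s$, the bin spacing is $\Delta f = f_s / N$ and the window spans $\Delta t = N / f_s$ seconds, so $\Delta f \cdot \Delta t = 1$: halving one doubles the other. The Python sketch below tabulates a few common sizes at 44100 Hz (the list of sizes is arbitrary).

```python
def resolution(window_size, sample_rate=44100):
    """Return (bin spacing in Hz, window duration in ms) for a given window length."""
    delta_f = sample_rate / window_size
    delta_t_ms = 1000.0 * window_size / sample_rate
    return delta_f, delta_t_ms

for n in (256, 512, 1024, 2048, 4096):
    df, dt = resolution(n)
    print(f"N = {n:5d}: {df:8.2f} Hz per bin, {dt:6.1f} ms per window")
```

The bin spacing is a nominal figure: a tapered window such as Hann has a main lobe several bins wide (four bins from null to null for Hann), so its effective frequency resolution is somewhat coarser.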

For non-real-time analysis, we can use longer windows than would be practical in real-time situations, but we still need to consider:

- Memory limitations

- Computational efficiency

- The Gabor-Heisenberg uncertainty principle (which states that time resolution and frequency resolution cannot both be made arbitrarily fine at the same time)

- The specific requirements of our analysis (whether we need precise timing, precise pitch, or a balance of both)

According to Curtis Roads:

A spectrum analyzer measures not just the input signal but the product of the input signal and the window envelope. The law of convolution, states that the multiplication in the time-domain is equivalent to convolution in the frequency-domain. Thus the analyzed spectrum is the convolution of the spectra of the input and the window signals. In effect, the window modulates the input signal, and this introduces sidebands clutter into the analyzed spectrum.

A smooth bell-shaped window minimizes the clutter. (Roads, 2004)

Bell-shaped windows such as Hann, Hamming, Gaussian and Blackman-Harris are commonly used for analysis and resynthesis in music. In practice, one can try different types of windows and choose the one best suited to the analysis at hand.

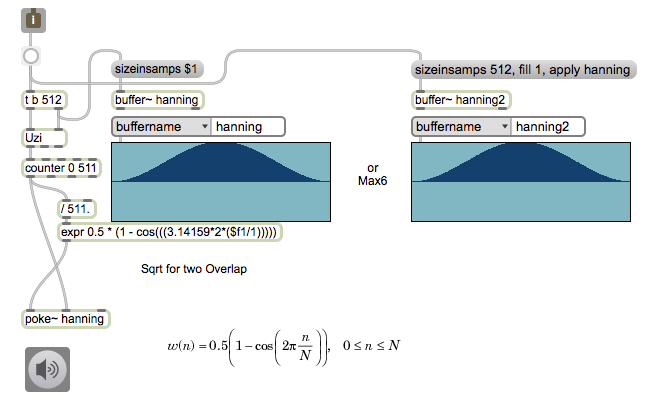
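The leakage that Roads describes can be observed numerically. The Python sketch below analyzes a sinusoid whose frequency falls exactly between two bins (the worst case), once without a window (equivalent to a rectangular window) and once with a Hann window, and reports how far below the spectral peak a distant bin lies. The signal length, the frequency and the choice of bin 25 are arbitrary assumptions made for the example.

```python
import cmath
import math

def dft_magnitudes(x):
    """Magnitudes of the non-negative-frequency DFT bins (naive O(N^2) DFT)."""
    N = len(x)
    return [abs(sum(x[n] * cmath.exp(-2j * math.pi * k * n / N) for n in range(N)))
            for k in range(N // 2 + 1)]

def hann(N):
    """Periodic Hann window w(n) = 0.5 * (1 - cos(2*pi*n / N))."""
    return [0.5 * (1 - math.cos(2 * math.pi * n / N)) for n in range(N)]

def level_below_peak_db(mags, bin_index):
    """Level of one bin relative to the largest bin, in dB (negative = below peak)."""
    return 20 * math.log10(mags[bin_index] / max(mags))

N = 64
k0 = 10.5                                     # halfway between bins 10 and 11
x = [math.sin(2 * math.pi * k0 * n / N) for n in range(N)]

rect_mags = dft_magnitudes(x)                 # no window = rectangular window
hann_mags = dft_magnitudes([s * w for s, w in zip(x, hann(N))])

rect_db = level_below_peak_db(rect_mags, 25)
hann_db = level_below_peak_db(hann_mags, 25)
print(f"bin 25, rectangular: {rect_db:.1f} dB")
print(f"bin 25, Hann:        {hann_db:.1f} dB")
```

The Hann-windowed spectrum falls off far more steeply away from the peak, at the cost of a wider main lobe around it.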

Window creation with Max/MSP

In Max/MSP, a window can be created by writing the values of a function into an object that stores audio samples, such as [buffer~]. In the following example, a 512-sample window is generated with the Hann (hanning) function:

$$w(n) = 0.5\left(1 - \cos\left(\frac{2\pi n}{N}\right)\right), \quad n = 0, 1, \dots, N-1$$

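If the sample rate is 44100 Hz and the FFT size is 1024, the FFT bins are spaced

$$\frac{44100}{1024} \approx 43.07 \text{ Hz}$$

apart. If the window occupies only half the FFT frame (512 samples, with the rest zero-padded), however, it can only resolve frequency differences on the order of

$$\frac{44100}{512} \approx 86.13 \text{ Hz}$$

so the effective band size is approximately 86 Hz. Zero-padding interpolates the spectrum but does not improve this resolution.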

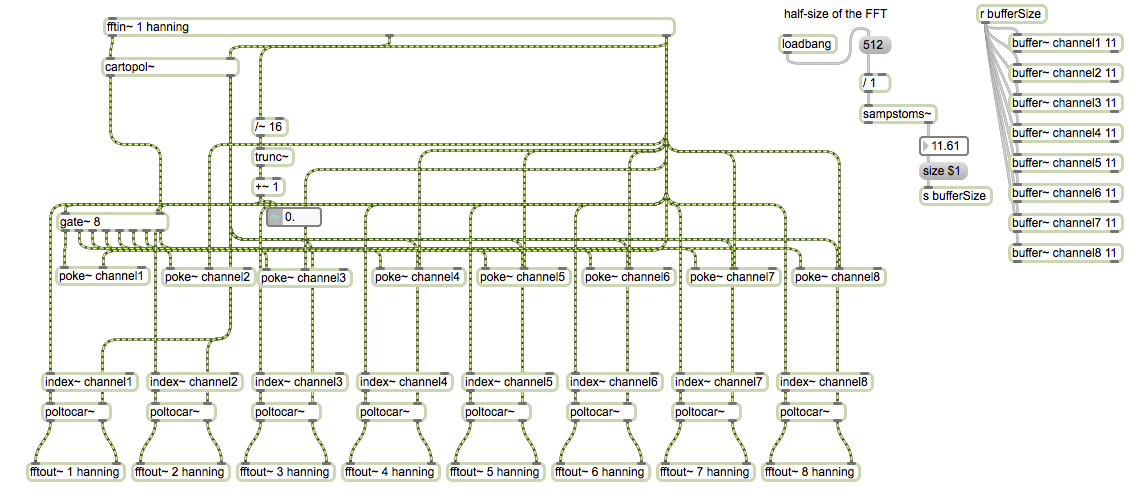
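Outside Max, the same table can be computed from the Hann formula above. The Python sketch below fills a 512-element list with the window (the list merely stands in for the sample-storage object; no Max API is involved) and checks a property that makes 50% overlap convenient for resynthesis: with a hop of $N/2$, overlapping Hann windows sum to a constant 1.

```python
import math

N = 512                                   # window length in samples, as in the Max example
window = [0.5 * (1 - math.cos(2 * math.pi * n / N)) for n in range(N)]

# Overlap-add the window with a hop of N/2 and inspect the middle region,
# where every sample is covered by exactly two windows.
hop = N // 2
length = 4 * N
ola = [0.0] * length
for start in range(0, length - N + 1, hop):
    for n in range(N):
        ola[start + n] += window[n]

steady = ola[N:length - N]                # ignore the partially covered ramps at both ends
print(min(steady), max(steady))
```

This works because $w(n) + w(n + N/2) = 0.5(1 - \cos\phi) + 0.5(1 + \cos\phi) = 1$ for the periodic Hann window.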

FFT in Max/MSP

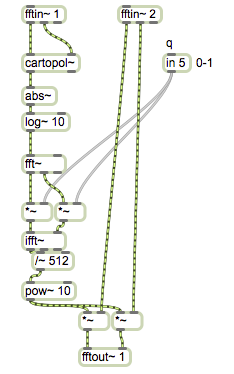

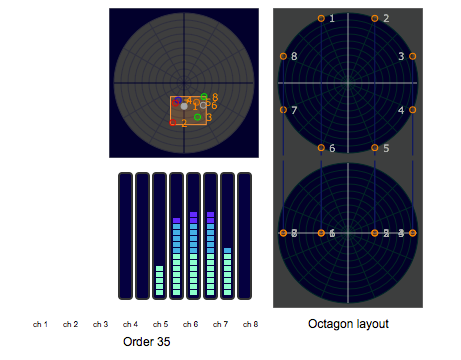

If the window has been created from a function as above, one way to perform an FFT in Max is to multiply the incoming signal by the window and pass the result to the [fft~] object. Another option is to load a patcher with the [pfft~ subpatch] object. Inside that patcher there should be an [fftin~ hanning] object, whose argument specifies the desired window type. The [pfft~]/[fftin~] pair yields three signals: a real part, an imaginary part and the FFT bin index, which are the three outlets of the [fftin~ hanning] object inside the [pfft~] patcher. From the real and imaginary signals it is possible to convert to polar form (magnitude and phase) and back from polar to Cartesian form before resynthesis with [ifft~] (or [fftout~] inside [pfft~]). The third outlet, the bin index, is very useful for analyzing, storing or displaying a signal.

In the author's approach, generating a spectral spatialization requires dividing the spectrum into eight separate channels. Because the bin index is known, the signal can be gated to a specific buffer once a particular index has been reached. The signal can thus be stored in eight different buffers, which are read and converted back to the time domain by eight different [fftout~ hanning] objects. Each output of the [pfft~ subpatch] is then sent to an Ambisonics encoder for further spatialization.
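The bin-routing idea can be sketched outside Max. The Python function below is a hypothetical illustration, not the author's patch: it analyzes a single frame, splits the non-negative-frequency bins into eight contiguous, equal-width bands (the article does not specify how bins are assigned, so this division is an assumption), and resynthesizes one time-domain signal per band. Bin $k$ is always kept together with its mirror $N-k$, so every channel stays real-valued. Because the DFT is linear, the eight channels sum back to the original frame.

```python
import cmath
import math

def dft(x):
    N = len(x)
    return [sum(x[n] * cmath.exp(-2j * math.pi * k * n / N) for n in range(N))
            for k in range(N)]

def idft(X):
    N = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * n / N) for k in range(N)) / N
            for n in range(N)]

def split_into_bands(frame, n_channels=8):
    """Hypothetical: split one frame into n_channels time-domain signals, one per band of bins."""
    N = len(frame)
    X = dft(frame)
    n_unique = N // 2 + 1                  # bins 0..N/2 are unique for a real signal
    channels = []
    for c in range(n_channels):
        lo = c * n_unique // n_channels
        hi = (c + 1) * n_unique // n_channels
        # Keep bin k only if its folded index min(k, N - k) lies in this band,
        # so bin k and its conjugate mirror N - k always travel together.
        masked = [X[k] if lo <= min(k, N - k) < hi else 0j for k in range(N)]
        channels.append([v.real for v in idft(masked)])  # imaginary part is ~0
    return channels

N = 64
# Two partials: 3 cycles per frame (lowest band) and 20 cycles per frame (a higher band).
frame = [math.sin(2 * math.pi * 3 * n / N) + 0.5 * math.sin(2 * math.pi * 20 * n / N)
         for n in range(N)]
channels = split_into_bands(frame)
# Each channel would feed its own encoder; summed, the channels restore the frame.
recon = [sum(ch[n] for ch in channels) for n in range(N)]
```

In a real-time setting the same routing would be applied to every overlapping STFT frame and the outputs overlap-added; a single frame keeps the sketch short.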

Cross-synthesis and Spectral Shredding

Cross-synthesis describes a number of techniques that combine properties of two sounds into a single sound. Convolution is a special case of cross-synthesis and serves as a bridge between the time domain and the spectral domain: convolving two signals in the time domain is equivalent to multiplying their spectra. The law of convolution states the following:

Convolution in the time domain is equal to multiplication in the frequency domain, and vice versa (Roads, 2004).
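The law can be verified on short sequences. With the DFT, multiplying two spectra corresponds to circular convolution of two length-$N$ sequences; to obtain ordinary (linear) convolution, both sequences are first zero-padded to at least $N_1 + N_2 - 1$ samples, as the Python sketch below does. The signal and impulse-response values are arbitrary.

```python
import cmath
import math

def dft(x):
    N = len(x)
    return [sum(x[n] * cmath.exp(-2j * math.pi * k * n / N) for n in range(N)) for k in range(N)]

def idft(X):
    N = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * n / N) for k in range(N)) / N for n in range(N)]

def direct_convolution(a, b):
    """Linear convolution in the time domain: y[n] = sum_m a[m] * b[n - m]."""
    y = [0.0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            y[i + j] += ai * bj
    return y

def spectral_convolution(a, b):
    """Same result via the frequency domain: zero-pad, DFT, multiply spectra, inverse DFT."""
    L = len(a) + len(b) - 1
    A = dft(a + [0.0] * (L - len(a)))
    B = dft(b + [0.0] * (L - len(b)))
    return [v.real for v in idft([p * q for p, q in zip(A, B)])]

signal = [1.0, 2.0, 0.5, -1.0]
impulse_response = [0.5, 0.25, 0.125]
print(direct_convolution(signal, impulse_response))
print(spectral_convolution(signal, impulse_response))
```

Both lines agree up to floating-point rounding. Without the zero-padding, the tail of the result would wrap around onto its beginning.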

There are several techniques for combining aspects of two sounds. For example, IRCAM's AudioSculpt [3] offers three types:

- cross-synthesis by mixing

- source-filter cross-synthesis

- generalized cross-synthesis

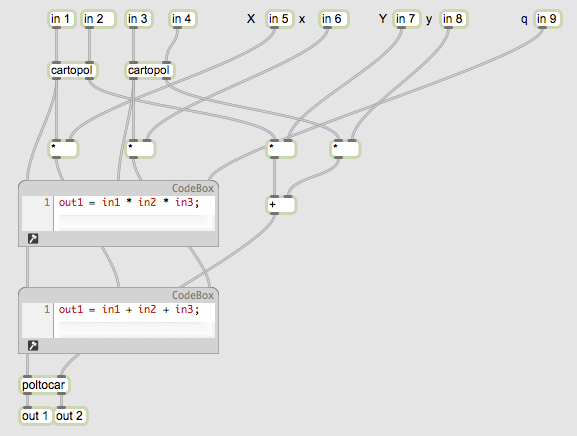

More generally, the cross-synthesis $S$ of two sounds $S_1$ and $S_2$, with magnitude spectra $M_1, M_2$ and phase spectra $\theta_1, \theta_2$, can be calculated bin by bin in several ways:

- summation: $S = S_1 + S_2$. This is the same as cross-synthesis by mixing: as the amplitude of $S_1$ increases, the amplitude of $S_2$ decreases.

- convolution: $M = M_1 M_2$, $\theta = \theta_1 + \theta_2$. Magnitudes are multiplied and phases are summed, after the FFT and the conversion from Cartesian to polar coordinates.

- cross-modulation: $M = M_1$, $\theta = \theta_2$. Magnitudes of $S_1$, phases of $S_2$.

- square-root convolution: $M = \sqrt{M_1 M_2}$, $\theta = \frac{1}{2}(\theta_1 + \theta_2)$

- cross-product #1: $M = M_1 M_2$, $\theta = \theta_1$

- cross-product #2: $M = M_1 M_2$, $\theta = \theta_2$
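The polar-form rules above translate directly into per-bin operations. The Python sketch below (the rule names and functions are illustrative, not taken from AudioSculpt or Max) converts each pair of complex bins from Cartesian to polar form with `cmath.polar`, applies one rule, and converts back with `cmath.rect` so the result could be passed to an inverse FFT. Summation is omitted because it happens in the time domain.

```python
import cmath
import math

# Each rule maps the polar values of one bin pair (M1, theta1, M2, theta2) to (M, theta).
RULES = {
    "convolution":      lambda m1, t1, m2, t2: (m1 * m2, t1 + t2),
    "cross_modulation": lambda m1, t1, m2, t2: (m1, t2),
    "sqrt_convolution": lambda m1, t1, m2, t2: (math.sqrt(m1 * m2), 0.5 * (t1 + t2)),
    "cross_product_1":  lambda m1, t1, m2, t2: (m1 * m2, t1),
    "cross_product_2":  lambda m1, t1, m2, t2: (m1 * m2, t2),
}

def cross_synthesize(X1, X2, rule):
    """Combine two complex spectra (lists of bins) bin by bin with a named rule."""
    combine = RULES[rule]
    out = []
    for a, b in zip(X1, X2):
        m1, t1 = cmath.polar(a)          # Cartesian -> polar
        m2, t2 = cmath.polar(b)
        m, t = combine(m1, t1, m2, t2)
        out.append(cmath.rect(m, t))     # polar -> Cartesian, ready for an inverse FFT
    return out

X1 = [1 + 2j, -0.5 + 0.3j, 3j]
X2 = [2 - 1j, 0.7 + 0.7j, -0.2 - 0.9j]
conv = cross_synthesize(X1, X2, "convolution")
xmod = cross_synthesize(X1, X2, "cross_modulation")
```

The convolution rule is equivalent to multiplying the complex bins directly. The square-root rule depends on how phases are wrapped: `cmath.polar` returns phases in $(-\pi, \pi]$, and halving $\theta_1 + \theta_2$ is only defined up to a shift of $\pi$.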

The first technique, summation or cross-synthesis by mixing, involves mixing two sounds by cross-fading their gains in the time domain. A spatialized summation would fade one sound in while fading the other out. Although no spectral processing is involved, it can give the listener the impression of one sound morphing into another, and into a different space.
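In code, summation is a crossfade with complementary gains. The Python sketch below uses an equal-power law, $g_1 = \cos(\frac{\pi}{2}p)$ and $g_2 = \sin(\frac{\pi}{2}p)$, a common choice that keeps the summed power roughly constant for uncorrelated sources. The gain law is an assumption; the article only states that one gain rises as the other falls.

```python
import math

def equal_power_gains(position):
    """Gains (g1, g2) for S1 and S2 at a crossfade position in [0, 1]."""
    return math.cos(0.5 * math.pi * position), math.sin(0.5 * math.pi * position)

def crossfade(s1, s2):
    """Fade s1 out and s2 in over the length of two equal-length signals."""
    n = len(s1)
    out = []
    for i in range(n):
        g1, g2 = equal_power_gains(i / (n - 1))
        out.append(g1 * s1[i] + g2 * s2[i])
    return out
```

A linear law ($g_1 = 1 - p$, $g_2 = p$) is the simpler alternative, but it tends to produce an audible dip in loudness halfway through when the two sounds are uncorrelated.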

The remaining techniques are performed in the spectral domain, after analyzing each signal with an FFT and converting the cosine and sine (real and imaginary) components of each bin to magnitudes and phases, that is, converting from Cartesian to polar coordinates. These techniques differ in the way magnitudes and phases are combined: magnitudes are multiplied (or taken from a single source), while phases are added, averaged or taken from a single source.

Source-filter cross-synthesis requires an extra step that takes the signal into the cepstrum domain. The word cepstrum is formed by reversing the first four letters of spectrum ("spec" becomes "ceps"). To enter the cepstrum domain, the following steps are required:

$x(n)\longrightarrow \boxed{\text{FFT}} \longrightarrow \boxed{\text{abs()}} \longrightarrow \boxed{\text{log()}} \longrightarrow \boxed{\text{iFFT}} \longrightarrow c_x(n)$
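The chain above computes the real cepstrum. The Python sketch below follows it step by step with a naive DFT; a small constant is added before the logarithm to avoid $\log 0$. As an assumption about how the cepstrum is typically used in source-filter processing (the article does not detail this step), the second function keeps only the low-quefrency coefficients ("liftering") and transforms back, giving a smoothed log-magnitude spectral envelope: the "filter" part of a source-filter model. The test signal is arbitrary.

```python
import cmath
import math

def dft(x):
    N = len(x)
    return [sum(x[n] * cmath.exp(-2j * math.pi * k * n / N) for n in range(N)) for k in range(N)]

def idft(X):
    N = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * n / N) for k in range(N)) / N for n in range(N)]

def real_cepstrum(x, eps=1e-12):
    """x(n) -> FFT -> abs() -> log() -> iFFT -> c_x(n)."""
    log_magnitude = [math.log(abs(X) + eps) for X in dft(x)]
    # log|X| is real and even for a real input, so the inverse DFT is real up to rounding.
    return [c.real for c in idft(log_magnitude)]

def spectral_envelope(x, n_coeffs=8):
    """Smoothed log-magnitude spectrum rebuilt from the n_coeffs lowest quefrencies."""
    c = real_cepstrum(x)
    N = len(c)
    # Keep quefrencies 0..n_coeffs-1 and their mirrors; zero the rest (a low-pass "lifter").
    liftered = [c[n] if n < n_coeffs or n > N - n_coeffs else 0.0 for n in range(N)]
    return [v.real for v in dft(liftered)]

N = 32
x = [math.sin(0.3 * n) + 0.5 * math.sin(1.7 * n) * math.exp(-0.05 * n) for n in range(N)]
c = real_cepstrum(x)
envelope = spectral_envelope(x)
```

Keeping all $N$ coefficients reproduces the full log-magnitude spectrum; fewer coefficients give a smoother envelope.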

In Max, the introduction of the gen~ object [4] made sample-by-sample audio processing possible in real time. This capability enables real-time spectral spatialization through the synchronized cross-synthesis of multiple audio sources as they move between loudspeakers, creating dynamic spatial relationships while maintaining spectral integrity across the sound field.

Moreover, by combining any of these techniques with spectral shredding, it is possible to spatialize different portions of the spectrum independently and at different times.

Tools

Several spatialization parameters can be modified in real time using software tools, including:

- the speed of circular motion of a sound source, including Doppler effects

- spatial trajectories of sound sources

- the behavior of sound sources, e.g. swarms

- directionality (Ambisonics order)

- the creation of a broad sound image

- the creation of an acoustic environment using time shifts (filters) and delays

Perceptual parameters include:

- azimuth and elevation

- source presence and distance attenuation

- room presence

- reverberation

- envelopes

DSP parameters include:

- equalization

- Doppler effect

- air absorption

- reverberation

- directional distribution for various speaker layouts, e.g. hexagonal

- delay

Many of these parameters can be set in IRCAM's Spat and in ICST's Ambisonics Tools for Max/MSP.
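The Doppler parameter depends on how the distance between source and listener changes, not on the speed of motion alone. The Python sketch below (all names and values are illustrative assumptions; it does not use Spat or the ICST tools) places a source on a circle and computes the frequency heard at a given listener position with the moving-source formula $f' = f\,\frac{c}{c + v_r}$, where $v_r$ is the rate of change of the distance (positive when the source recedes). A listener at the exact center hears no shift, because the distance never changes; an off-center listener hears the pitch fall and rise with each revolution.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 degrees C

def source_position(t, radius, rev_per_sec):
    """Counter-clockwise circular motion centered on the origin."""
    angle = 2 * math.pi * rev_per_sec * t
    return radius * math.cos(angle), radius * math.sin(angle)

def heard_frequency(f, t, listener, radius, rev_per_sec, dt=1e-5):
    """Doppler-shifted frequency at `listener` for a source moving on the circle."""
    def distance(tt):
        x, y = source_position(tt, radius, rev_per_sec)
        return math.hypot(x - listener[0], y - listener[1])
    # Radial velocity by central difference: positive when the source recedes.
    v_r = (distance(t + dt) - distance(t - dt)) / (2 * dt)
    return f * SPEED_OF_SOUND / (SPEED_OF_SOUND + v_r)

# A 440 Hz source on a 2 m circle, one revolution per second.
print(heard_frequency(440.0, 0.3, (0.0, 0.0), 2.0, 1.0))   # centered listener
print(heard_frequency(440.0, 0.25, (5.0, 0.0), 2.0, 1.0))  # off-center, source receding
print(heard_frequency(440.0, 0.75, (5.0, 0.0), 2.0, 1.0))  # off-center, source approaching
```

The sketch uses the instantaneous radial velocity and ignores propagation delay. A delay-line implementation, in which the delay follows distance divided by the speed of sound, captures the same effect sample by sample.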

Circular Motion (aesthetics)

The ability to localize sounds in space is affected by the spectral content of the sound itself, especially its attack. The author has found that low-frequency sounds tend to be harder to localize, that crisp, sharp sounds work best for conveying direction, and that long, continuous sounds with complex spectra work best for spectral morphing. Loudspeaker layouts arose mainly from functional designs based on the square and rectangular rooms of studios such as the WDR [5]; nevertheless, an aesthetics of circular motion has become established, and the space created by moving sounds around an audience is perceived as circular.

This may be attributed to Stockhausen's Rotationstisch (rotating table), a round table carrying a loudspeaker that could rotate through 360$^\circ$. As the table turned, the sound was captured by a quadraphonic or octophonic array of microphones placed around it. The perceptions associated with the Rotationstisch created an aesthetics of circular sound space that includes side effects seldom considered by commentators, such as the noise, air movement and Doppler effects generated by the movement of the table itself.

In his work Oktophonie (1990-1992), Stockhausen added a new dimension to spatialization by designing a cube array of eight equidistant speakers, four on the floor and four suspended.

The piece features different kinds of rotation as well as several types of sound movement between the eight vertices and across the six faces of the cube, such as spiral and diagonal displacements.

Although different types of rotation were used in Oktophonie, upon listening it appears that none of these methods was particularly effective in allowing the listener to localize sound sources accurately. This limitation highlights the potential of spectral spatialization, which can enhance clarity and spatial perception in a musical work by providing more precise and discernible spatial cues, allowing listeners to better identify the location and movement of sounds within the acoustic space. (Stockhausen, 1994)

The following techniques have been implemented in Max/MSP and tested in multichannel performance contexts.

Stereo Cross-Synthesis

Two sound sources are blended using FFT-based analysis and resynthesis. After both signals are transformed to the spectral domain, their magnitudes and phases are combined using the cross-synthesis methods described earlier. A single parameter controls the morphing between the two sources, allowing smooth real-time transitions between hybrid timbres.
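The article does not specify how the single morphing parameter acts on the spectra. One plausible reading, sketched below in Python as an assumption, interpolates each bin's magnitude geometrically ($M = M_1^{1-p} M_2^{p}$) and its phase along the unit circle. With this choice, $p = 0$ reproduces $S_1$, $p = 1$ reproduces $S_2$, and $p = 0.5$ gives the magnitude $\sqrt{M_1 M_2}$ of the square-root convolution. The function name `morph` is hypothetical.

```python
import cmath

def morph(X1, X2, p):
    """Hypothetical spectral morph between two complex spectra, p in [0, 1]."""
    out = []
    for a, b in zip(X1, X2):
        m1, t1 = cmath.polar(a)
        m2, t2 = cmath.polar(b)
        magnitude = (m1 ** (1 - p)) * (m2 ** p)          # geometric interpolation
        # Interpolate the phase through unit vectors to avoid jumps at the +/- pi wrap point.
        direction = (1 - p) * cmath.exp(1j * t1) + p * cmath.exp(1j * t2)
        out.append(cmath.rect(magnitude, cmath.phase(direction)))
    return out

X1 = [1 + 1j, 2 - 0.5j, -0.3 + 0.1j]
X2 = [-0.3 + 0.4j, 1j, 0.8 - 0.6j]
halfway = morph(X1, X2, 0.5)
```

Sweeping $p$ from 0 to 1 frame by frame produces the transition. When the two phases are exactly opposite at $p = 0.5$, the interpolated direction vanishes and `cmath.phase` returns 0, an edge case a real implementation would need to smooth over.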

Spatialization Techniques

Basic rotation distributes a single source across an array of eight speakers by manipulating amplitude envelopes in sequence. Swarm behavior assigns independent trajectories to multiple simultaneous sources, each following a slightly offset path and phase, creating the impression of a cloud of sound moving through the space rather than a single localized object.
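One way to realize rotation with sequenced amplitude envelopes is pairwise constant-power panning around a ring of eight speakers, sketched below in Python. The speaker layout (equal spacing, speaker 0 at 0°, angles increasing counter-clockwise) and the panning law are assumptions; the article itself encodes all spatialization with Ambisonics, so this illustrates the principle rather than the author's implementation. A swarm is approximated by evaluating the same rotation for several sources with offset starting angles.

```python
import math

def ring_gains(azimuth_deg, n_speakers=8):
    """Constant-power pairwise panning gains for one source on a speaker ring."""
    spacing = 360.0 / n_speakers
    position = (azimuth_deg % 360.0) / spacing   # fractional speaker index
    i = int(position) % n_speakers               # speaker at or just before the source
    frac = position - int(position)              # 0..1 of the way to the next speaker
    gains = [0.0] * n_speakers
    gains[i] = math.cos(0.5 * math.pi * frac)
    gains[(i + 1) % n_speakers] += math.sin(0.5 * math.pi * frac)
    return gains

def rotation_envelopes(rev_per_sec, duration, steps, offset_deg=0.0):
    """Gain envelopes (one gain list per time step) for a source rotating at constant speed."""
    return [ring_gains(offset_deg + 360.0 * rev_per_sec * duration * s / steps)
            for s in range(steps)]

# A small swarm: three sources on the same rotation, offset by 10 degrees from each other.
swarm = [rotation_envelopes(0.25, 4.0, 16, offset_deg=10.0 * k) for k in range(3)]
```

Only the two speakers adjacent to the source receive signal, and the sum of squared gains stays at 1, so perceived loudness remains roughly constant as the source moves.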

Sound trajectories allow sources to follow predefined paths — arcs, spirals, diagonal displacements — drawing on the same path data used in Stockhausen's Oktophonie cube geometry. Combined movements layer rotation, swarm and trajectory techniques simultaneously, producing spatial textures that resist simple localization and instead create a sense of enveloping density.

All spatialization is encoded using ICST's Ambisonics objects for Max/MSP, which handle the encoding, manipulation and decoding of spatial audio with support for variable Ambisonics order.
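For readers unfamiliar with the encoding stage, the Python sketch below shows horizontal first-order Ambisonics in its simplest form. A source at azimuth $\theta$ is encoded into three signals, $W = s$, $X = s\cos\theta$ and $Y = s\sin\theta$, and a basic (sampling) decoder feeds speaker $i$ at azimuth $\varphi_i$ of a regular ring of $N$ speakers with $\frac{1}{N}\left(W + 2(X\cos\varphi_i + Y\sin\varphi_i)\right)$. Normalization conventions vary between tools (FuMa, SN3D, N3D and others), so these weights are an assumption and do not reproduce the ICST objects' internals. The example places eight band signals, such as the outputs of spectral shredding, at eight different azimuths.

```python
import math

def encode_first_order_2d(sample, azimuth_rad):
    """Horizontal first-order encoding (W, X, Y) of one sample, unnormalized weights."""
    return sample, sample * math.cos(azimuth_rad), sample * math.sin(azimuth_rad)

def decode_ring(w, x, y, n_speakers=8):
    """Basic sampling decoder for a regular ring; speaker 0 at azimuth 0."""
    feeds = []
    for i in range(n_speakers):
        phi = 2 * math.pi * i / n_speakers
        feeds.append((w + 2 * (x * math.cos(phi) + y * math.sin(phi))) / n_speakers)
    return feeds

# Eight band signals (one sample each, arbitrary values), each placed at its own azimuth.
band_samples = [0.2, -0.1, 0.05, 0.3, -0.25, 0.15, 0.0, 0.1]
mix = [0.0] * 8
for b, s in enumerate(band_samples):
    w, x, y = encode_first_order_2d(s, 2 * math.pi * b / 8)
    # Decoding is linear, so decoding each band and summing equals decoding the summed B-format.
    for i, v in enumerate(decode_ring(w, x, y)):
        mix[i] += v
```

At first order the image is broad: a source aimed at one speaker also leaks into its neighbors and produces a small negative feed in the opposite speaker. Higher Ambisonics orders narrow this spread.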

Conclusion

Spectral spatialization has great potential for adding clarity and meaning to music. While conventional spatialization focuses on the movement of a sound object, spectral spatialization becomes a structural part of the work. The author has developed various cross-synthesis techniques and the concept of spectral shredding. Although Max/MSP is oriented toward real-time audio processing, the use of these techniques in acousmatic composition should not be ruled out.

[1] The term spectral spatialization was introduced by the author in 2013 during his doctoral studies.

[2] Experimental Acoustic Research Studio (EARS), University of California, Riverside.

Short Time Fourier Transform

[3] AudioSculpt is IRCAM's software for viewing, analyzing and processing sounds. Among other features, it offers cross-synthesis powered by SuperVP, IRCAM's phase vocoder analysis engine. More information: http://anasynth.ircam.fr/home/english/software/Audiosculpt

[4] Max/MSP's gen~ compiles visually generated code in real time. Code generated with gen~ can be exported to C++.

[5] Founded in 1951, the Studio für elektronische Musik was located in the main building of the WDR in downtown Cologne; in 1987 it was moved to the south of Cologne, near the Rhine.